Unlocking the Power of Large Language Models (LLMs) for HS Classification

Large language models (LLMs) are transforming the field of artificial intelligence, and we’re here to guide you through how they apply to HS classification. Starting with the basics, we explain how neural networks serve as the foundation for LLMs. These networks take text encoded as numbers and are trained by adjusting their knobs, or parameters, until they produce accurate results. It’s like tuning a radio until the station comes in clearly.

But it doesn’t stop there. We also explore the critical role of video cards in enhancing LLM performance. Originally developed for gaming, these powerful hardware components now accelerate neural network processing, enabling us to harness the full potential of LLMs.

With a solid understanding of LLMs in place, we move on to their application in HS classification. Quickcode is at the forefront of this technological revolution, leveraging state-of-the-art language models to simplify and expedite the HS code selection process.

Our software platform empowers HS classifiers by providing instant access to a wealth of relevant sources and references. By synthesizing information from schedules, notes, rulings, and explanatory notes, Quickcode guides you toward the correct classification in record time. Think of it as having a comprehensive classification assistant at your fingertips.

We understand the nuances and complexities involved in HS classification. That’s why Quickcode operates on a human-in-the-loop model. Our platform doesn’t replace HS classifiers; it enhances their capabilities by combining their expertise with the power of AI.

Curious to experience the transformation for yourself? Sign up for our no-obligation and no credit-card-required Free Plan at https://quickcode.ai. With our platform, you’ll enjoy the speed, accuracy, and convenience of AI-supported HS classification.

In sum, LLM-assisted classification is the future of HS classification, which means major potential for importers and exporters. Don’t miss out on this exciting opportunity to optimize your HS code selection process!

Join us on this journey of innovation. Watch our video and prepare to unlock the full power of large language models for HS classification. If you have any questions, we’re here to provide the guidance and support you need. Let’s embrace the future together.

Video Transcript:

Timestamps

0:00 What is an LLM? (Large Language Model)

0:05 Starting with a Neural Network

1:15 Adding Video Cards

2:36 The First Successful Neural Network Language Model

6:37 LLM for HS Classification

7:55 Try Quickcode – Get Started Free

LLM for HS Classification: What is an LLM? (Large Language Model)

So, let’s talk about large language models, because that’s the genre of AI that we’re really interested in. How do you make one? Well, you start with a neural network.

LLM for HS Classification: Starting with a Neural Network

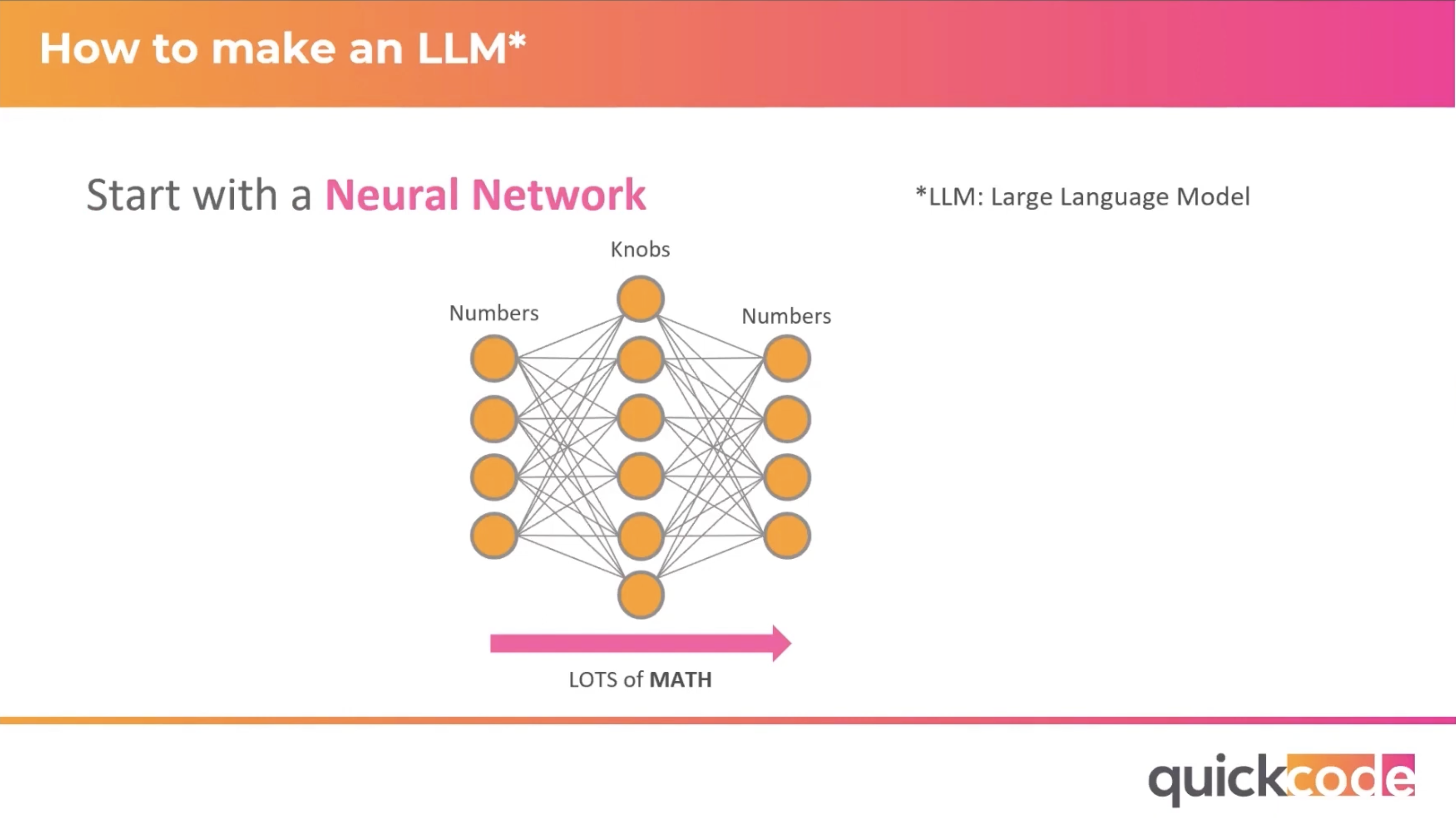

What’s a neural network? Here’s a picture of one. You start with numbers on the left, which are the inputs, and these numbers can encode all kinds of different things. It could be a picture, it could be music; in our case, what we’re really talking about is text. You take text and you encode it into numbers. And then you push it through these knobs. What are these knobs? Well, these are really called parameters, or weights. I call them knobs because you can adjust them, and that’s how you train this neural network: by tweaking these knobs.

Tweaking these knobs is really similar to tuning a radio station, which has one knob, the tuning knob. You start with noise, you get it kind of close to the station, and you can hear it dialing in. You listen, and then you tweak a little more, and it sounds better. You listen, you tweak a little more. Oh, you overshot, so you come back. That’s how these things are trained too.

You start with numbers on the left. You push them through these knobs, which is basically math, lots and lots of math, and you get numbers on the right. You look at the numbers on the right. Is that really what you expected? If it’s not, then you tweak the knobs and you do it again, and you keep tweaking the knobs until the numbers on the right are what you expected. Then you’ve got a trained neural network.
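The tweak-and-listen loop described above can be sketched in a few lines of Python. This is a minimal illustration, not how a real LLM is trained: it assumes a "network" with a single knob, made-up inputs and targets, and plain gradient descent as the tweaking rule. The function name `train_one_knob` and all the numbers are hypothetical.

```python
def train_one_knob(inputs, targets, learning_rate=0.01, steps=200):
    """Tune a single weight so that knob * input approximates each target."""
    knob = 0.0  # start far from the "station"
    for _ in range(steps):
        # Push the inputs through the knob, compare with what we expected,
        # and average the gradient of the squared error over all examples.
        gradient = sum(
            2 * (knob * x - y) * x for x, y in zip(inputs, targets)
        ) / len(inputs)
        # Tweak the knob a little in the direction that reduces the error.
        knob -= learning_rate * gradient
    return knob


# Made-up training data: the "right" knob setting here is 3.0.
inputs = [1.0, 2.0, 3.0, 4.0]
targets = [3.0, 6.0, 9.0, 12.0]

knob = train_one_knob(inputs, targets)
print(f"trained knob: {knob:.4f}")
```

A real language model repeats the same idea with billions of knobs at once, which is why the amount of math involved becomes so large.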

LLM for HS Classification: Adding Video Cards

So, that’s what you start with. And then what do you add? You add some video cards. Back in ’83, this was Donkey Kong. In 2023, 40 years later, it’s the same video game with a very different look. How did we get there? Well, the invention of video cards. The first 3D graphics card came out in ’96 at 350,000 triangles per second. That’s TPS; you can find these numbers in the TPS report. In 2023, we have modern GPUs that have thousands of cores and can do several billion triangles per second.

And how do they do these triangles per second? Math. Everything that’s required to render this 2023 picture of Donkey Kong, which is all of the lighting and the shading and the 3D effects and all the surfaces and everything else, is all math. And coincidentally, it’s the same math that’s required to generate those neural networks that we talked about on the previous page. So, you’ve got the idea of a neural network, and then you feed it this really, really capable hardware, which was driven by the gaming industry, which is a huge industry. At some point, some smart data scientists said, “Hey, I can use these video cards to process my neural networks,” and, voilà, AI was transformed. So, we started with neural networks, added in some video card hardware, and then tried it on language.
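To make the "same math" point concrete, here is a small Python sketch. It assumes the shared operation is matrix-vector multiplication, which is the core of both rotating a triangle's corner in graphics and computing one layer of a neural network (activation functions omitted). The helper `matvec` and every number below are illustrative, and a GPU would run many such multiplications in parallel rather than one at a time.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# Graphics: rotate one corner of a triangle by 90 degrees.
angle = math.pi / 2
rotation = [
    [math.cos(angle), -math.sin(angle)],
    [math.sin(angle), math.cos(angle)],
]
vertex = [1.0, 0.0]
rotated = matvec(rotation, vertex)

# Neural network: push two input numbers through a layer of weights (knobs).
weights = [
    [0.5, -1.0],
    [2.0, 0.25],
]
layer_inputs = [4.0, 2.0]
layer_output = matvec(weights, layer_inputs)

print("rotated vertex:", [round(c, 6) for c in rotated])
print("layer output:  ", layer_output)
```

Both results come from the same `matvec` call; only the meaning of the numbers differs.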

The First Successful Neural Network Language Model

So, in 2013, the first successful neural network language model was built by Google. It revolutionized the space. It had been tried before, but it was too hard. Language was too hard because there are so many nuances to it, so many rules, so much meaning, etc. We didn’t have enough capability, but once video cards came along and we started using those for neural networks, Google was able to do it successfully. They did it in 2013, and in 2017, four years later, they invented the transformer architecture, which was another sea change in the industry. The very next year, they released their first transformer-based neural language model, which is called BERT. You can see BERT in the upper left-hand corner of this picture here. It’s still around, still in use in lots of places today. That same year, a company called OpenAI, which at that time was a non-profit organization, released its first transformer-based language model, called GPT-1. You can see it in there too.

By the way, what’s represented in this picture is how many knobs, weights, parameters, whatever you want to call them, are in these language models. And you can see BERT and GPT-1; they’re just little specks compared to what we have today. The ones that we have today, only five years later, are enormous. So, from 2018 with GPT-1 to 2022 with GPT-3.5, that’s only two generations. Each of those generations of GPT was a hundred times bigger than the previous one. That’s what ChatGPT is based on. You can see GPT-3, that generation, in the left-hand corner of the picture. That’s how big it is, and that’s the thing that really took the world by storm. That’s the thing that caused the newspaper articles. That’s the thing that got everybody’s attention, until the granddaddy of large language models came out just last month, actually a month and a half ago now: GPT-4, the next generation of GPT.

This is the one that not only spawned newspaper articles, it spawned an open letter by really important academics and technology people that said stop, you’ve gone too far. This is enough. We need to take a break. Do we really need to? I don’t know, but that’s what happened. That’s how much splash this thing made. It is nominally a hundred times more powerful, well, more knobs, not necessarily more power, but 100 times more knobs than GPT-3. We don’t know, because OpenAI hasn’t told us, but what they have told us is that it’s 40% more likely to produce factual responses than ChatGPT, which makes us go, um, I mean, how many non-factual responses does ChatGPT produce that GPT-4 could be 40% better? That makes you scratch your head.

But in any case, the picture on the right is kind of fun. These are exam scores for both ChatGPT, which is 3.5, in blue, and GPT-4, in green. The one I’ve circled there is the bar exam. Your next attorney is probably not GPT-4, but what probably is true is that GPT-4 did better on the bar exam than your next attorney, because it scored in the 90th percentile, which means it scored better than 90% of the test takers out there.

So, that’s how you… oh, you know what I missed on this slide? The idea of letting it read the internet. This thing is trained on the entire internet, and so it knows really everything that’s on the internet and it can respond like a human. As I said before, you have to take it with a grain of salt. The old adage of “don’t believe everything you read on the internet” is now kind of like “don’t believe everything that a chatbot read on the internet and is telling you is true in a very convincing manner.”
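The head-scratcher about the “40% more likely to produce factual responses” figure can be worked through with a little arithmetic. The sketch below assumes one particular reading of that claim, namely a 40% relative increase in the share of responses that are factual; the original statement may have been measured differently, so treat the result as an illustration of the reasoning rather than a statistic about either model. The function name `max_baseline_rate` is hypothetical.

```python
def max_baseline_rate(relative_gain):
    """Highest baseline factual rate that still leaves room for the gain.

    If the improved rate is baseline * (1 + relative_gain) and no rate can
    exceed 100%, the baseline can be at most 1 / (1 + relative_gain).
    """
    return 1.0 / (1.0 + relative_gain)


ceiling = max_baseline_rate(0.40)
print(f"baseline factual rate is at most {ceiling:.1%}")
print(f"so at least {1 - ceiling:.1%} of baseline responses were not factual")
```

Under this reading, the older model could have been factual at most about 71% of the time on whatever evaluation was used, which is why the claim makes you scratch your head.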

LLM for HS Classification

So, what about large language models for HS classification? Well, I’ll give it a try. Let’s just ask it, “Hey, GPT-4, what’s the HTSUS classification of a can of chicken noodle soup?” It starts with, “I’m not a customs expert…” and a lot of disclaimers, and then it goes on to say, “…you might want to start in chapter 16.” Well, I’m not a customs expert either, but I’m pretty sure that a can of soup is in chapter 21 (specifically 2104.1060, right there). So, what I would recommend you do is the last thing that GPT-4 told me, which is “consult a customs broker.”

But where large language models do work well for classification is this: take the input that you give it (can of chicken soup), find all the relevant sources, and serve them up to you at your fingertips. Use those relevant sources to guide you to what the correct code is. You could argue that’s what it was doing; it was just wrong. And let the user, the expert, decide what’s completely out to lunch in the information that it’s giving them. So, not the picture on the left here, which is a robot taking over your job, but the picture on the right here, a tool that you can use to do a test classification.
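The “find the relevant sources and let the expert decide” idea can be sketched as a tiny retrieval step. This is only an illustration and makes no claim about how Quickcode works: it swaps the language model for simple keyword overlap, and the `SOURCES` snippets are paraphrased placeholders rather than official tariff text. All names here (`SOURCES`, `keywords`, `find_candidates`) are hypothetical.

```python
import re

STOP_WORDS = {"a", "an", "and", "as", "of", "or", "such", "the"}

# Paraphrased placeholder snippets for illustration only, not official text.
SOURCES = {
    "Chapter 16 (meat preparations)": "prepared or preserved meat such as chicken",
    "Heading 1902 (pasta)": "pasta such as spaghetti, macaroni and noodles",
    "Heading 2104 (soups)": "soups and broths and preparations therefor",
    "Chapter 72 (iron and steel)": "iron and steel",
}


def keywords(text):
    """Lowercase the text, crudely singularize words, and drop stop words."""
    words = re.findall(r"[a-z]+", text.lower())
    stems = {w[:-1] if w.endswith("s") and len(w) > 3 else w for w in words}
    return stems - STOP_WORDS


def find_candidates(description, sources=SOURCES):
    """Return the references sharing at least one keyword, best match first."""
    query = keywords(description)
    scored = [(len(query & keywords(text)), ref) for ref, text in sources.items()]
    ranked = sorted(scored, key=lambda pair: -pair[0])
    return [ref for score, ref in ranked if score > 0]


candidates = find_candidates("can of chicken noodle soup")
for ref in candidates:
    print("review:", ref)
```

The sketch surfaces the meat, pasta, and soup references and leaves out the irrelevant one; choosing among the candidates is left to the human classifier, which is the human-in-the-loop part.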

Try Quickcode – Get Started Free

How do I know that this works well? Because I built one of these things. It’s called Quickcode. Here’s my shameless Quickcode plug. What does Quickcode do? It provides quick and accurate HS classification to experts. It incorporates state-of-the-art language models (the ones that we just talked about). It’s a human-in-the-loop HS classification system, which means it doesn’t do the classification for you. It uses those language models and that AI to consult all of the references, serve those references to you at your fingertips, and guide you, using those references, to the right classification. Most importantly, it’ll help you do it in 60 seconds. It helps you walk through the same process that you already follow as an HS classifier, which is to look at the schedule, look at the notes, look at the rulings, look at the WCO explanatory notes, synthesize all that information based on the thing that you’re trying to classify, and then come up with the right classification. It helps you take that same process, which would have taken you tens of minutes or hours before, and get it done in 60 seconds.

I hope you’ll give us a try. You can sign up for our beta; we’re not live yet. We are starting next month, but we’re in beta. Everybody can use it: just go to that link and put in that code, which, if you’re wondering, is a cold-water shrimp with the shell on. You can register with that code and try out our beta.

What’s our takeaway? Number one, AI is not going away, and it is getting drastically better and more ubiquitous every single year, or every single month, it seems like. The best AI tools out there let humans do what they’re good at, which is understanding the nuance of things like HS classifications, and let the AI do what it’s good at, which is synthesizing large amounts of data and serving it up to you. And then, the last thing is that HS classifiers are not going to be replaced by AI, but they are going to be replaced by HS classifiers who use AI.

That’s it. I think we’ll take some questions now. Hopefully there are some.

Learn More:

Will HS Classification Be Replaced by AI?